"I didn't grow up dreaming of prompting." [Part 3]

Part 3: What's left? What's the upside?

This post is part of a series: Part 1 | Part 2 | Part 3

As promised, I’ve put in the (virtual) shoe leather cost to offer some thoughts on how to use the tools, the pros and cons, some of the most obvious pitfalls, and what’s left for creative work.

Hopefully this is the most positive section of the post series—I certainly meant it that way. Remember that, even when it looks like you might not, you always have a choice: whether to participate, how to participate, and what your lines are.

5. A Tolerable Engineering Workflow

A provocative title, but hopefully this section will prove relatively optimistic.

As part of the research for this post, I reached out to most of my friends and former colleagues to get a sense of who was using the tools in what way. The idea that human-computer interfaces have fundamentally shifted is interesting, but it seems too maxi, and isn’t reflected in the day-to-day of the people I talked to.

Few-to-no experienced engineers are actually “vibe coding” production code. Instead, there’s a huge spread of heuristics for developing confidence[1] in the AI output.

A common response was what one friend summarized as, “use the ideas, not the output.” That is, potentially don’t even have the tools integrated directly into your workflow, but have them near-to-hand, to prompt, pair with, bounce ideas off, and gain context.

“It’s a powerful tool in the hands of an expert,

and a chainsaw in a kindergarten for others”

However, some take it much further. As part of this exploration, I asked the best engineer I’ve ever worked with (a former CTO of mine) whether they were using AI tools, and what their workflow was. It turns out the answer is yes, and the workflow they described to me is what I’d call very sensible.

They first generate and then iterate a spec document that multiple agents can read, using beads. They observe that a clear plan is crucial: “LLMs are mostly great for helping with stuff you already understand…but they do surprising stuff all the time…”

Then they iterate.

“An iterative process of

- describe the next step, maybe ask the LLM to research similar things in other languages
- Have the LLM implement it
- Review and iterate
- On a couple of occasions, decide it was
  - Unsalvageable, or
  - A bad experiment
- Then throw it away to either start again or go in a different direction

It wasn’t a quick process, but it was a lot quicker than doing it the old fashioned way… the LLM interaction process actually led to me finding and exploring things I didn’t set out to do.”
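For the engineers reading, here is a minimal sketch of the loop that quote describes: describe a step, have the model implement it, review, iterate, and throw the work away when it isn't salvageable. To be clear, this is my own illustration and not my former CTO's actual tooling. Every name in it (`run_step`, `Verdict`, `fake_implement`, `fake_review`) is hypothetical, and the "LLM" and the "reviewer" are stand-in functions so the example runs on its own.

```python
from enum import Enum
from typing import Callable, NamedTuple, Tuple


class Verdict(Enum):
    ACCEPT = "accept"    # good enough to keep
    ITERATE = "iterate"  # worth another round with feedback
    DISCARD = "discard"  # unsalvageable, or a bad experiment


class StepResult(NamedTuple):
    description: str
    attempts: int
    kept: bool


# Hypothetical signatures: implement(description, feedback) -> draft,
# and review(draft) -> (verdict, feedback for the next attempt).
Implement = Callable[[str, str], str]
Review = Callable[[str], Tuple[Verdict, str]]


def run_step(description: str, implement: Implement, review: Review,
             max_attempts: int = 3) -> StepResult:
    """Describe a step, have it implemented, then review and iterate."""
    feedback = ""
    for attempt in range(1, max_attempts + 1):
        draft = implement(description, feedback)
        verdict, feedback = review(draft)
        if verdict is Verdict.ACCEPT:
            return StepResult(description, attempt, True)
        if verdict is Verdict.DISCARD:
            # Low emotional investment: throw it away and move on.
            return StepResult(description, attempt, False)
    # Out of attempts: treat it as a bad experiment and discard it.
    return StepResult(description, max_attempts, False)


def fake_implement(description: str, feedback: str) -> str:
    """Stand-in for the LLM: folds any feedback into the next draft."""
    return f"{description} (revised: {feedback})" if feedback else description


def fake_review(draft: str) -> Tuple[Verdict, str]:
    """Stand-in for the human reviewer: rejects the first draft once."""
    if "revised" in draft:
        return Verdict.ACCEPT, ""
    return Verdict.ITERATE, "handle the edge cases"
```

The part worth noticing is that discarding is a first-class outcome rather than a failure path, and that the review step is the human's job. The sketch only works if whoever plays `review` knows what good looks like.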

What’s most interesting is that they are solving what I would call Hard Problems—in this case writing an Algebraic Effects library in Elixir. Moreover, they say that the output is better than they could have achieved by themselves. One of the main benefits they identify is not only the speed of development, but also an ability to discard work, in light of a lower emotional investment in the code generated. This is also something my former colleague Paul mentioned.

In other words, the cost of iteration has been reduced.

Something to note about this person, who seems to be really succeeding with the tooling, is that they are a domain expert. They’ve been iterating solutions in this space for a decade and know what questions to ask, in addition to having a strong mental model of the problem space and grokking the key desiderata.

If you don’t have that, you might be in trouble. As they say,

“Mixed in with all the great, often it’s just stupid, wrong, or both—it doesn’t actually understand anything and it has no aesthetic sense beyond reversion to the mean, which makes it a powerful tool in the hands of an expert, and a chainsaw in a kindergarten for many other users.”

Using the agent just to do research for you is already pretty valuable, and verifying that its suggestions aren’t crazy is relatively fast compared to, say, parsing code. Gergely Orosz says HashiCorp founder Mitchell Hashimoto “always [has] an agent running in the background doing something. He kicks off tasks before leaving the house — research, edge-case analysis, library comparisons — so work progresses while he drives or is away.” There’s a good quote from Mitchell on that episode of the Pragmatic Engineer podcast:

“There’s a lot of people like, ‘I don’t want it to write code for me.’ But just delegate some of the research part.” He uses agents for library comparisons, edge-case analysis, and deep research—not just code generation. “You don’t need to pick up on the ‘it must replace you as a person’ kind of propaganda.”

For what it’s worth, this is kind of where I am currently. So naturally, confirmation bias kicks in and I think this is a Great Take.

Returning to the cost of iteration, Will Faithfull, another Northern technologist—whose blog you should read—argues this is why a 50/50 split of features to refactoring is needed when using these tools. It’s easy to get carried away, and the tools have no aesthetic taste, beyond “whatever patterns they encounter; from the codebase, from the current session, from their own prior output.”

Why 50/50? We’ve anecdotally observed that AI productivity decays over the lifetime of a project, and that the rate of decay is strongly influenced by codebase quality and complexity. Complexity grows unavoidably as a project matures; there’s not lots you can do about that. What you can control is quality, and that determines how steep the decline is. What drives this decay? Pattern amplification.

It’s worth saying that some working in AI claim that the agents do now have aesthetic taste. However, one of the models mentioned there is the one my former boss is using. I trust their judgement more. Moreover, that statement is couched in a piece that is (a) pure ‘Criti-hype’ fearmongering, and (b) by somebody that works in AI. Caveat emptor.[2]

Finally, back to Will’s point on diminishing returns: I think there’s likely an inverse relationship to TCO, depending on how good your decisions are about when to ‘do the work’ and accrue domain knowledge, versus when to offload that work to an AI tool. Lack of understanding of the domain, or codebase, represents a hidden cost that isn’t accounted for in the cost of production or sale—something in economics we’d call an externality.[3]
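One rough way to write that accounting down, with the caveat that the symbols are my own shorthand for illustration and nothing here is measured:

```latex
C_{\text{total}} = C_{\text{production}} + C_{\text{externality}}
```

Here $C_{\text{production}}$ is the visible cost of iterating with the tools, and $C_{\text{externality}}$ is the hidden cost of not understanding the domain or codebase. Cheaper iteration only lowers the first term; it says nothing about the second.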

The cost of iteration has been reduced,

but it is important to keep in mind the Total Cost.

At a meta-level, there’s a cross-project version of this externality—deskilling. If the argument that users of AI tools gradually deskill holds true, then you would expect this externality-related cost to grow across all your projects.[4] The more your team uses the tools, the worse they perform, over time, across all work—unless you have a way of maintaining their skill base. Needless to say, this is a hard problem.

As discussed in Part 2 in the context of organisational design, what Stuart Winter-Tear calls ‘Speeding One Cog Breaks the Machine’[5] is a serious risk in software projects. If the process improvement isn’t holistic, all that happens is you move the bottleneck point within a meta system or process and flood somewhere else.

Anecdotally, that point seems to be code review, and I’ve personally seen not only code review but also additional post-hoc sense checks of code become necessary. That’s a big, time-consuming piece of work.

Also key to this all working in the long-term is a missing part of the current picture—completely open-source AI models, governed in a way we can trust.[6] If you optimise a workflow on top of technology that will eat our societies then you’re still rearranging the proverbial deck chairs on the Titanic.

This is a super hard problem and I’m not sure I have any answers at all, let alone any easy ones.

6. You’ll Own Nothing, and You’ll Be A Start-Up

This is pretty short, because the argument is simple. Integrating AI at the heart of your business venture is likely not a good idea unless you’re a large business, an incumbent, or operate in an area with high barriers to entry.

If you build your business on somebody else’s model, then you are accruing wealth, power and money to their platform. If your business fundamentally requires their model, then you’re in even tougher straits. You have no USP, you have no real barrier to entry, and perhaps you have no business.

Think of the Commandment of Control and Commandment of Entry, which I’ve discussed before, and the point should become clear. As other writers have noted, if your start-up depends on an AI model, you’re not a SaaS product or a platform product; you’re a margins player, and your business can be disrupted by a new competitor at any time, for a low cost.

7. A Manifesto for Non-Participation

As should probably be pretty clear by this point, I think there’s a moral, political and creative imperative to resist, so far as is practical, the use of AI tools in the creative process.

I’m more of a pragmatist when it comes to the world of work, but I’m also not jazzed about the level of asymmetric power at play. I’m happy to use the tools to a point, but by doing so feel complicit in a political and ideological programme that is toxic. I don’t know how to square that circle.

To take it back to a reference from the start of this piece, this asymmetric power recalls the plight of weavers at the advent of the Industrial Revolution. I believe my name is French Huguenot, so maybe I have a bone-deep latent sympathy with the weavers and Luddites for that reason, I don’t know.

Non-participation is an act of resistance.

You might be in the situation where if you completely reject AI tooling, you might lose your job or face sanctions—I’m, to be honest, in a similar situation in my profession.[7] So don’t get fired, but don’t blindly accept, either.[8] Remember, you always have a choice.

At the very least, non-participation is an act of resistance. Non-participation is also an optimistic act. As far as I can tell, many people kind of hate AI, but go along with using it out of fear.[9]

AI enthusiasts and the e/acc crowd can beat you over the head with non-participation and call you a Luddite.[10] However, as I’ve said, this is an optimistic act. It’s doubly so when you combine it with a call to arms for creativity.

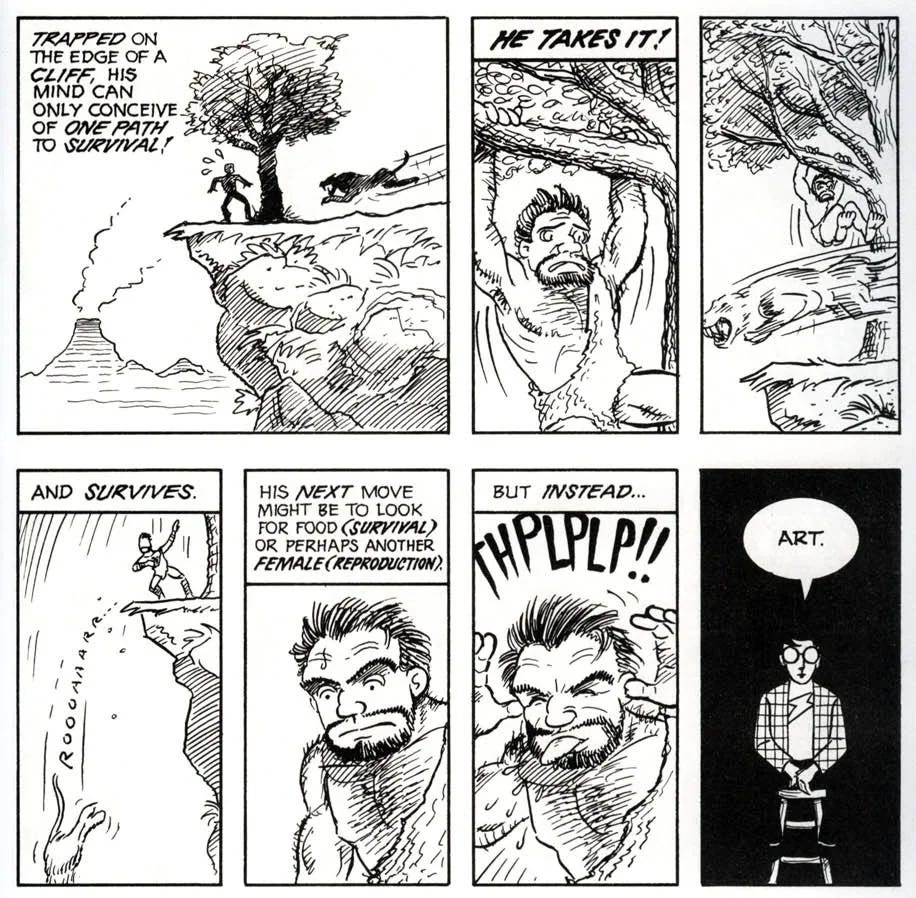

Nobody can tell you not to create. Every scribble is art. In Understanding Comics,[11] Scott McCloud advances possibly the best definition for art I’ve ever seen:[12]

“Any human activity which doesn't grow out of either of our species' two basic instincts: survival and reproduction.”

Even then, this might be too narrow!

Don’t ask for permission. Create. Your work is valid. Your thoughts are valid, your aesthetic is valid.

Don’t replace yourself. Don’t deskill yourself. Don’t impoverish yourself. Do the hard thing because that is all there is to life.

Life, like creative suffering, is what you make of it.

Acknowledgements: Thanks to the many people that fed back on earlier or draft versions of this series, including but not limited to: Jon Stone, Craig McMillan, and Rob Bowley. Cheers for the conversations while I worked out shower thoughts to Andy Gray, Geoff Goodell, James Morgan, David Scott, David Alesch and Jack Gray. Thanks also to all my network that I have bugged about AI tooling, workflows, best practice in their places of employment and for opinions. I hope I’ve done your thoughts and feedback justice.

[1] Have to be an academic pedant here and say that I’m using ‘confidence’ as a stand-in for what Williamson (1993) would call ‘Trust’, using Earle via De Filippi et al. (2020) to add a time dimension and re-frame it as confidence: “confidence is generally associated with a feeling of predictability.” I’m also not limiting myself to the Williamson/Earle/De Filippi tendency to see trust or confidence as only calculative; especially where AI is concerned, it is both non-calculative and calculative (i.e. perhaps not strictly rational). Final thought, in this already excessive footnote: often confidence in the context of novel technology or socio-technical systems is secured by emergent regulation (bottom-up or top-down, Williamson, 1993), “expert systems” such as the legal system or guilds (De Filippi, et al., 2020) or the implicit threat of the state (Curry, 2024). How many of these are operative, and how, in either (a) how we govern AI in the large (i.e. at the granularity of a country), or (b) how we govern AI in the small (i.e. at the granularity of a workplace)?

[2] Every generation has a religious or social movement that suggests the end of the world will come in their lifetimes. I think on some level pieces like this play into this emotional tendency humans have. AGI might be equivalent in power to the atomic bomb—I strongly doubt “big guessing machines,” sorry, LLMs, are in the same category. They seem like better, spookier machine learning to me. Or faster horses.

[3] The cost plus externalities adds up to what is commonly called the ‘Social Cost’ or ‘Total Cost.’

[4] As an aside, if you’re an engineer, you could, like the characters in Shaun of the Dead, “go to the Winchester, have a nice cold pint, and wait for all of this to blow over.” Sooner or later everybody will have deskilled and you’ll be the one-eyed dev in the land of the blind.

[5] Or the System Decoder post, ‘Speed Is Not A Strategy, It Is Just Faster Chaos.’

[6] To be clear, although the current gap is wide, smart, motivated, and in most cases well-meaning people are currently working hard on building these.

[7] Red-hot take: you should probably have the right to refuse to use AI tooling at work.

[8] Even if the AI writes a hit piece on you.

[9] Except, as I’ve noted, where people are using it to do genuinely super-tedious low value stuff, or make memes.

[10] Or a ‘decel,’ which is a pretty pathetic insult, as far as they go. One might rebut and point out that most users of that term are f—king philistines, but that would be beneath me (oops). As the Oscar Wilde quote goes, these are guys that know “the price of everything and the value of nothing,” except that given they’re pushing a broken economic programme, it seems they actually know “the price of nothing and the value of nothing.” Which is substitutable for “nothing.”

[11] Absolutely, undoubtedly one of the best books ever written on any subject, in my humble opinion.

[12] I’ve thought about this a lot, and I think it’s likely for one simple reason—it’s more or less the broadest definition I’ve ever seen.